Globally Consistent Alignment for Planar Mosaic via Topology Analysis

Menghan Xia, Jian Yao*, Renping Xie, Li Li, and Wei Zhang

School of Remote Sensing and Information Engineering, Wuhan University, Wuhan, Hubei, P.R.China

*Email: jian.yao@whu.edu.cn; Web: http://cvrs.whu.edu.cn

The C++ source code has been released (09/07/2016).

1. Abstract

Over the last decade, many image mosaicking methods have been proposed for robotic mapping and remote sensing applications. However, most of them focus mainly on minimizing the registration error. This does not necessarily guarantee the global consistency of the mosaicking result, especially for wide-range pseudo-planar scenes affected by perspective distortion.

In this paper, we propose a generic framework based on topology analysis for the globally consistent alignment of images of planar scenes. It resists perspective distortion while preserving local alignment accuracy.

For topology estimation, we search for a main chain connecting all images over a quickly built similarity table of image pairs (mainly for unordered image sequences). Along this chain, potentially overlapping pairs are incrementally detected according to the gradually recovered geometric positions.

Based on the resulting topological graph, all images are organized into a spanning tree by a graph algorithm. This tree is used to find the optimal reference image, i.e., the one that minimizes the total error propagation.

With this topology analysis, we perform globally consistent alignment in two stages. First, images are aligned group by group with a robust affine model. The models are then refined under an anti-perspective constraint, which keeps an optimal balance between alignment precision and global consistency.

Finally, experiments on several challenging datasets demonstrate the validity of the proposed approach.
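The exact main-chain search of the paper is not reproduced here. The sketch below illustrates the idea of connecting all images through their most similar pairs by computing a maximum spanning tree (Prim's algorithm) over a similarity table. This swaps in a standard graph technique as an assumption. The function name `maxSpanningTree` and the meaning of the scores in `sim` are also hypothetical.

```cpp
#include <cassert>
#include <cstddef>
#include <limits>
#include <utility>
#include <vector>

// Hypothetical sketch (not the paper's code): link all images through their
// most similar pairs by computing a maximum spanning tree with a dense O(n^2)
// Prim's algorithm. sim[i][j] is an assumed symmetric similarity score
// (higher = more likely to overlap). Returns (parent, child) edges rooted at 0.
std::vector<std::pair<int, int>> maxSpanningTree(
    const std::vector<std::vector<double>>& sim) {
  const int n = static_cast<int>(sim.size());
  for (const auto& row : sim) assert(row.size() == sim.size());  // square table
  std::vector<std::pair<int, int>> edges;
  if (n == 0) return edges;

  std::vector<bool> inTree(n, false);
  std::vector<double> best(n, -std::numeric_limits<double>::infinity());
  std::vector<int> parent(n, -1);
  best[0] = 0.0;  // grow the tree from image 0

  for (int iter = 0; iter < n; ++iter) {
    // Pick the unconnected image with the strongest link to the current tree.
    int u = -1;
    for (int v = 0; v < n; ++v)
      if (!inTree[v] && (u < 0 || best[v] > best[u])) u = v;
    inTree[u] = true;
    if (parent[u] >= 0) edges.emplace_back(parent[u], u);

    // Record u as the strongest known link for the remaining images.
    for (int v = 0; v < n; ++v) {
      if (!inTree[v] && sim[u][v] > best[v]) {
        best[v] = sim[u][v];
        parent[v] = u;
      }
    }
  }
  return edges;
}
```

With a table in which each image is most similar to its temporal neighbours, the tree degenerates into a chain such as 0-1-2-3.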

2. Approach

Aiming to achieve a mosaicking result with both accurate alignment and global consistency, we propose a generic framework composed of three steps: topology estimation, reference image selection, and global alignment. The framework is suitable for both time-consecutive and unordered image sets. The flowchart is depicted in Figure 1.

Figure 1: The flowchart of the proposed approach.

Details of the algorithm are described in our paper, which was submitted to the international journal "Pattern Recognition".

3. Experimental Results

3.1. Dataset for experiments

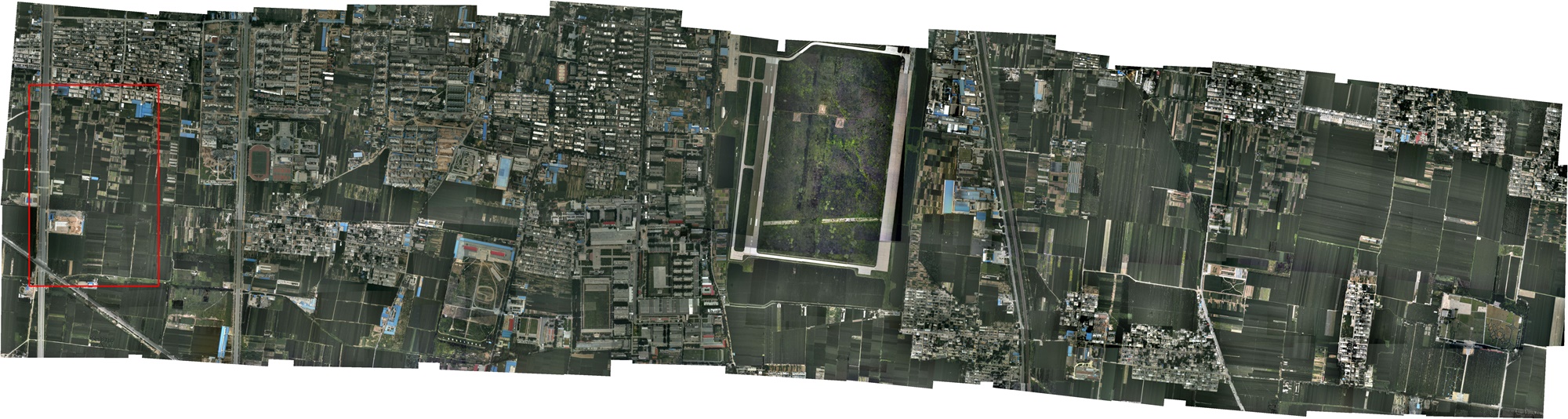

To test the proposed approach thoroughly, two typical aerial image sets are used as the experimental datasets. They were acquired over different landforms by different flight platforms.

The first dataset consists of 744 aerial images in 24 ordered strips. It was captured at a flight height of about 780 meters over an urban area. The original images, with a forward overlap of about 60%, are down-sampled to 1000×642 in our experiments.

The second dataset consists of 130 images down-sampled to 800×533. It was captured by an unmanned aerial vehicle (UAV) with a forward overlap of about 70% and covers a suburban area with mountains.

Some of the aerial and UAV sequence images are shown in Figure 2 and Figure 3, respectively.

Figure 2: Thumbnails of the aerial sequence images.

Figure 3: Thumbnails of the UAV sequence images.

3.2. Evaluation on Selection of Initial Models

When obtaining the initial alignment, the choice among rigid, affine, and homographic transformation models affects the final mosaicking result. To amplify the influence of error factors, we selected a strip-shaped aerial image subset from the first dataset and a block-shaped UAV image subset from the second dataset. In each subset, the image at one end is set as the reference image.

The comparison covers both alignment precision and global consistency. The numerical results are shown in Table 1, and the global consistency can be judged from the visual results in Table 2.
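For reference, the three candidate models can be written as follows. This assumes "rigid" denotes a rotation plus a translation; some works also include a uniform scale. The parameter names are chosen here for illustration.

$$
\text{Rigid (3 DOF):}\quad
\begin{bmatrix} x' \\ y' \end{bmatrix} =
\begin{bmatrix} \cos\theta & -\sin\theta \\ \sin\theta & \cos\theta \end{bmatrix}
\begin{bmatrix} x \\ y \end{bmatrix} +
\begin{bmatrix} t_x \\ t_y \end{bmatrix}
$$

$$
\text{Affine (6 DOF):}\quad
\begin{bmatrix} x' \\ y' \end{bmatrix} =
\begin{bmatrix} a_{11} & a_{12} \\ a_{21} & a_{22} \end{bmatrix}
\begin{bmatrix} x \\ y \end{bmatrix} +
\begin{bmatrix} t_x \\ t_y \end{bmatrix}
$$

$$
\text{Homographic (8 DOF):}\quad
x' = \frac{h_{11}x + h_{12}y + h_{13}}{h_{31}x + h_{32}y + h_{33}},\qquad
y' = \frac{h_{21}x + h_{22}y + h_{23}}{h_{31}x + h_{32}y + h_{33}}
$$

The homography matrix $H = [h_{ij}]$ is defined only up to scale, which leaves 8 degrees of freedom. The extra freedom lets a pairwise model absorb perspective effects. The two-stage design in the abstract addresses the trade-off between this local accuracy and global consistency: a robust affine model is used first, followed by refinement under an anti-perspective constraint.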

Numerical results:

Table 1: RMS of the reprojection errors via different transformation models chosen for initial alignment (GR: Global Refinement; Unit: pixel).

| Initial Models | Aerial: Matches | Aerial: RMS | Aerial: RMS(GR) | UAV: Matches | UAV: RMS | UAV: RMS(GR) |
|---|---|---|---|---|---|---|
| Rigid | 131279 | 3.142 | 1.247 | 48783 | 5.112 | 1.985 |
| Affine | 131279 | 2.825 | 1.117 | 48783 | 4.421 | 1.743 |
| Homographic | 131279 | 2.459 | 0.808 | 48783 | 3.605 | 1.485 |
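The RMS values above are root-mean-square reprojection errors over matched features, in pixels. The minimal sketch below computes such an RMS for a single image pair under a 2×3 affine model. It is an assumed setup, not the released code: in the global setting each match would be reprojected through both images' models. The names `Point2`, `Affine`, and `rmsReprojectionError` are hypothetical.

```cpp
#include <array>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical types for the sketch.
struct Point2 {
  double x, y;
};
using Affine = std::array<double, 6>;  // row-major [a11 a12 tx ; a21 a22 ty]

// RMS of the distances ||M * src[i] - dst[i]|| over all matched pairs, where
// M maps points of image A (src) into image B (dst).
double rmsReprojectionError(const Affine& m, const std::vector<Point2>& src,
                            const std::vector<Point2>& dst) {
  assert(src.size() == dst.size() && !src.empty());
  double sumSq = 0.0;
  for (std::size_t i = 0; i < src.size(); ++i) {
    const double px = m[0] * src[i].x + m[1] * src[i].y + m[2];
    const double py = m[3] * src[i].x + m[4] * src[i].y + m[5];
    const double dx = px - dst[i].x;
    const double dy = py - dst[i].y;
    sumSq += dx * dx + dy * dy;  // squared Euclidean residual
  }
  return std::sqrt(sumSq / static_cast<double>(src.size()));
}
```

For example, a pure shift of one pixel along x, `{1, 0, 1, 0, 1, 0}`, reproduces matches that differ only by that shift with an RMS of 0.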

Visual results:

Table 2: Thumbnails of the mosaicking results via different transformation models on the aerial images and the UAV images.

3.3. Comparative Evaluation on Mosaicking

In this section, the final mosaicking results of our approach are evaluated both qualitatively and quantitatively. First, we compare our approach with the commercial software PTGui. To compare only the alignment results, the subsequent seamline detection and tonal correction steps are skipped in PTGui, and our image stacking order is made consistent with that of PTGui. The visual comparisons of the mosaicking results on the aerial and UAV images are illustrated in Figure 4 and Figure 5, respectively. The marked regions highlight typical differences between the two mosaicking results.

In addition, the corresponding topological graphs are depicted in Figure 6. Green edges indicate image pairs with more than 100 matched features, and gray edges indicate pairs with fewer than 100. The spanning tree generated when searching for the optimal reference image is marked with red edges, and its center node represents the reference image.
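One way to picture the reference-image search is to treat each tree edge as one chained pairwise transformation. Under that assumption, the total depth of a breadth-first spanning tree counts how many transformations are composed across all images. The sketch below picks the root with the smallest total depth. This criterion, the function name `selectReferenceImage`, and the rule that only pairs with more than 100 matches count as edges (borrowed from the green/gray split in Figure 6) are all assumptions rather than the paper's exact procedure.

```cpp
#include <cassert>
#include <limits>
#include <queue>
#include <vector>

// Hypothetical sketch: return the image whose BFS spanning tree has the
// smallest total depth (sum of hop counts to every other image), using only
// pairs with more than minMatches matched features as graph edges.
// Returns -1 if the resulting graph is disconnected.
int selectReferenceImage(const std::vector<std::vector<int>>& matchCount,
                         int minMatches = 100) {
  const int n = static_cast<int>(matchCount.size());
  assert(n > 0);
  for (const auto& row : matchCount) assert(row.size() == matchCount.size());

  int bestRoot = -1;
  long long bestTotal = std::numeric_limits<long long>::max();
  for (int root = 0; root < n; ++root) {
    std::vector<int> depth(n, -1);  // -1 marks "not yet reached"
    std::queue<int> frontier;
    depth[root] = 0;
    frontier.push(root);
    long long total = 0;
    int reached = 1;
    while (!frontier.empty()) {
      const int u = frontier.front();
      frontier.pop();
      for (int v = 0; v < n; ++v) {
        if (depth[v] < 0 && matchCount[u][v] > minMatches) {
          depth[v] = depth[u] + 1;  // one more chained transformation
          total += depth[v];
          ++reached;
          frontier.push(v);
        }
      }
    }
    // Only roots that reach every image are valid candidates.
    if (reached == n && total < bestTotal) {
      bestTotal = total;
      bestRoot = root;
    }
  }
  return bestRoot;
}
```

On a simple chain of five images, this criterion selects the middle image, which matches the intuition of a center node in the tree.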

Figure 6: Topological graphs recovered by the proposed approach for the aerial images (a) and the UAV images (b).

4. Supplementary Experiments

To evaluate the mosaicking result quantitatively, we selected a UAV image set for which a DOM (digital orthophoto map) with a precision of 0.2 meters is available. The set consists of 62 images down-sampled to 1500×1001. A DOM is an orthophoto map produced by a rigorous photogrammetric procedure, and here it serves as the ground truth. The mosaicking result and the DOM of the corresponding area are depicted in Figure 7.

For the quantitative evaluation, 21 well-distributed corresponding check points were manually selected in both the ground truth and the mosaic image. A 2D similarity transformation with four degrees of freedom was used to align the two sets of check points. The distribution of these check points after alignment into a common coordinate system is displayed in Figure 8; the root-mean-square (RMS) error is 2.508 m. Note that the alignment errors are converted to metric units using the ground resolution of the ground-truth image.
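A 4-DOF similarity transformation $x' = a x - b y + t_x$, $y' = b x + a y + t_y$ has a closed-form least-squares solution. Center both point sets, then set $a = \sum (x u + y v) / \sum (x^2 + y^2)$ and $b = \sum (x v - y u) / \sum (x^2 + y^2)$, where $(u, v)$ are the centered target coordinates. The translation then follows from the means. The sketch below fits this model from mosaic check points to DOM check points and converts the residual RMS to meters. The names `Pt`, `Similarity2D`, `fitSimilarity`, and `rmsInMeters` are hypothetical. So is the `metersPerPixel` value: treating the DOM's 0.2 m precision as its ground resolution is an assumption.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

struct Pt {
  double x, y;
};

// 4-DOF similarity: (a, b) encode scale and rotation, (tx, ty) the translation.
struct Similarity2D {
  double a, b, tx, ty;
  Pt apply(const Pt& p) const {
    return {a * p.x - b * p.y + tx, b * p.x + a * p.y + ty};
  }
};

// Closed-form least-squares fit mapping src (mosaic) onto dst (ground truth).
Similarity2D fitSimilarity(const std::vector<Pt>& src, const std::vector<Pt>& dst) {
  assert(src.size() == dst.size() && src.size() >= 2);
  const double n = static_cast<double>(src.size());
  Pt ms{0, 0}, md{0, 0};  // centroids of both point sets
  for (std::size_t i = 0; i < src.size(); ++i) {
    ms.x += src[i].x / n; ms.y += src[i].y / n;
    md.x += dst[i].x / n; md.y += dst[i].y / n;
  }
  double numA = 0, numB = 0, den = 0;
  for (std::size_t i = 0; i < src.size(); ++i) {
    const double x = src[i].x - ms.x, y = src[i].y - ms.y;  // centered source
    const double u = dst[i].x - md.x, v = dst[i].y - md.y;  // centered target
    numA += x * u + y * v;
    numB += x * v - y * u;
    den += x * x + y * y;
  }
  assert(den > 0);  // requires at least two distinct source points
  Similarity2D s{numA / den, numB / den, 0, 0};
  s.tx = md.x - (s.a * ms.x - s.b * ms.y);
  s.ty = md.y - (s.b * ms.x + s.a * ms.y);
  return s;
}

// RMS of residual distances after alignment, scaled by the ground resolution
// of the ground-truth image (meters per pixel).
double rmsInMeters(const Similarity2D& s, const std::vector<Pt>& src,
                   const std::vector<Pt>& dst, double metersPerPixel) {
  assert(src.size() == dst.size() && !src.empty());
  double sumSq = 0;
  for (std::size_t i = 0; i < src.size(); ++i) {
    const Pt p = s.apply(src[i]);
    const double dx = p.x - dst[i].x, dy = p.y - dst[i].y;
    sumSq += dx * dx + dy * dy;
  }
  return metersPerPixel * std::sqrt(sumSq / static_cast<double>(src.size()));
}
```

With 21 check points, the four parameters are over-determined, so the remaining RMS reflects the non-similarity distortion of the mosaic rather than a mere offset, scale, or rotation.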

Figure 7: The DOM of the observed area (a) and the mosaicking result of the proposed approach (b).

Figure 8: Distribution of the check points, where the red ones are from the DOM and the green ones are transformed from the mosaic image.

Dataset and Codes

- Aerial Dataset (744 Images): /projects/PlanarMosaicking/data/Aerial744.zip

- UAV Dataset (130 Images): /projects/PlanarMosaicking/data/UAV300.zip

- Yongzhou City Dataset (61 Images): /projects/PlanarMosaicking/data/YongzhouCity.zip

- Algorithm Codes: C++ Source Code

Citation
